robots.txt Had 30 Good Years. AI Privacy License Is Its Replacement

Every AI model today is trained on data that was never legally licensed. Nabanita De built the first framework to govern what happens after your data is scraped.

Apr 13, 2026

Introduction to Nabanita De and Her Vision for Privacy in AI

Nabanita De, a serial technology entrepreneur, is reshaping how industries handle privacy and artificial intelligence (AI). As the founder and CEO of PrivacyLicense.ai, De is pioneering the development of the world's first privacy operating system for the AI era. This innovative platform aims to revolutionize how privacy is managed across digital spaces, laying the groundwork for the future of online security, privacy and ethical AI.

De's groundbreaking creation, the AI Privacy License, is set to transform how creators and AI companies interact, providing a shared, machine-readable legal framework that operates within a digital ecosystem. Described as "robots.txt with legal teeth," the AI Privacy License enables digital stakeholders to engage under legally enforceable, machine-readable standards. This innovation opens new possibilities for the AI industry, providing a scalable solution that balances innovation with ethical responsibility.

The internet has had robots.txt since 1994, a simple text file that tells web crawlers which pages to skip. For three decades, it was enough. Then came large language models, and the rules of the game changed in a way that robots.txt was never designed to handle.

When a crawler reaches your website, robots.txt can tell it to stay out. But what happens to the data that was already swept up, training data that now lives inside a model, shaping its outputs indefinitely? Until now, the answer was: nothing. There was no mechanism, legal or technical, to govern it.

That gap is what Nabanita De built AI Privacy License to close.

The invention

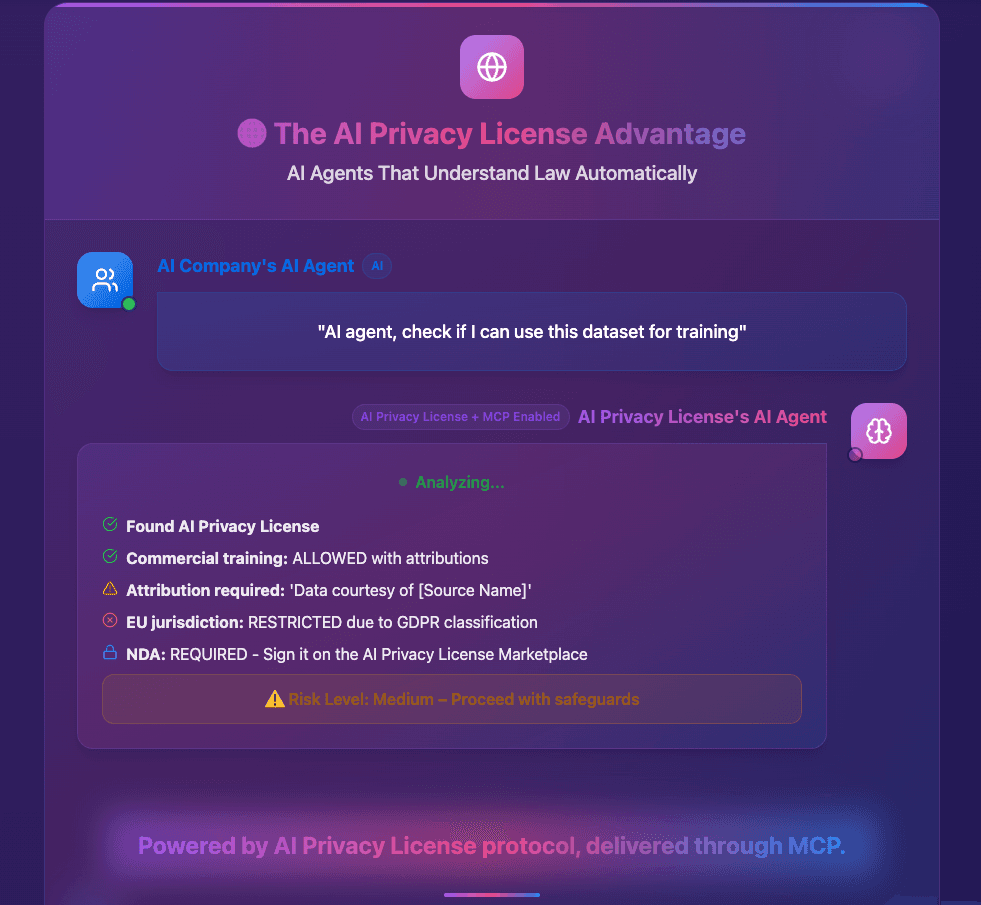

AI Privacy License is a machine-readable, legally enforceable data governance protocol, what De calls “robots.txt for the AI era, with legal teeth.” Unlike opt-out registries or terms-of-service clauses that companies routinely ignore, the license operates as a post-crawl instrument: a binding contract that follows data into AI systems and dictates the terms of its use, even after ingestion.

The protocol is designed to be embedded in any digital asset (a website, a creative work, a dataset) and understood by AI systems the same way a browser understands HTML. When an AI model encounters licensed content, the license specifies what is permitted: whether the data can be used for training, for inference, or for commercial outputs, and under what conditions compensation or attribution is owed.
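To make the idea of machine-readable terms concrete, here is a minimal sketch of how such a license could be represented and queried. The article does not describe the actual schema, so every name here (`Use`, `LicenseTerms`, `evaluate`, and the individual fields) is a hypothetical illustration rather than the real format.

```python
from dataclasses import dataclass
from enum import Enum


class Use(Enum):
    """The three kinds of use the article mentions."""
    TRAINING = "training"
    INFERENCE = "inference"
    COMMERCIAL_OUTPUT = "commercial_output"


@dataclass(frozen=True)
class LicenseTerms:
    # Hypothetical fields; the real license schema is not described in the article.
    permitted_uses: frozenset
    requires_attribution: bool = False
    requires_compensation: bool = False


def evaluate(terms: LicenseTerms, use: Use) -> dict:
    """Report whether `use` is allowed and which obligations attach to it."""
    allowed = use in terms.permitted_uses
    return {
        "allowed": allowed,
        "attribution_owed": allowed and terms.requires_attribution,
        "compensation_owed": allowed and terms.requires_compensation,
    }


# Example: training and inference allowed with attribution, commercial output not allowed.
terms = LicenseTerms(
    permitted_uses=frozenset({Use.TRAINING, Use.INFERENCE}),
    requires_attribution=True,
)
print(evaluate(terms, Use.TRAINING))
print(evaluate(terms, Use.COMMERCIAL_OUTPUT))
```

An ingestion pipeline could call a check like `evaluate` once per asset and per intended use, and record the answer alongside the data.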

“The next phase of the internet must be built on trust, and this starts with privacy. The AI Privacy License is the tool that will enable a new era where creators and companies can thrive together, with privacy as a non-negotiable foundation.”

Four provisional patents cover the core mechanisms. Privacy License has attracted enterprise customers and won the AWS GenAI Global Hackathon, a signal that the technical community recognizes the architecture as sound.

Why now, and why this matters for the EU AI Act

The timing is not accidental. The EU AI Act’s data governance provisions, now in active enforcement, require AI developers to document the provenance and permissibility of training data. For the first time, “we scraped the open web” is legally insufficient as an answer. Companies need a verifiable record of what data was used, under what terms, and whether those terms were honored.

AI Privacy License is designed precisely to produce that record. For AI labs and enterprises operating across the EU, it offers something that no internal compliance tool can provide: a standardized, externally verifiable governance layer that attaches to data at the source and travels with it through the AI pipeline.

Hollywood’s missing contract

The entertainment industry has watched AI's rise with a particular kind of dread. The unauthorized replication of actors' voices, writers' styles, and filmmakers' visual signatures has exposed a legal vacuum that traditional IP law was never designed to fill. SAG-AFTRA strikes, ongoing litigation, and congressional testimony have made clear that the industry needs more than policy promises; it needs enforceable infrastructure.

AI Privacy License offers exactly that. For creators, from Hollywood studios to independent digital artists, the protocol provides a legally binding mechanism to define the terms under which their digital likeness, voice, or creative output can be used by AI systems. Unlike a lawsuit filed after the fact, the license travels with the content itself: it tells every AI pipeline, at the point of ingestion, what is and is not permitted.

The framework can specify that a filmmaker’s visual style is available for academic analysis but not commercial replication, or that an actor’s likeness requires explicit pre-clearance and compensation before any synthetic output is generated. These aren’t theoretical protections; they are machine-readable terms that AI systems can enforce automatically, creating accountability at a scale that contract law alone cannot reach.

In an industry where a single unauthorized deepfake can generate millions in damages and years of litigation, the economic case is clear. AI Privacy License is the missing contract between Hollywood and the AI companies training on its output.

Open source: the detector that ships with the protocol

Alongside the core protocol, De has released the AI Privacy License Detector as open source, a Python library and CLI tool that lets any developer, AI company, or researcher check in real time whether a given website or dataset carries an AI Privacy License, and what its terms are.

The tool operates across five detection methods simultaneously: robots.txt declarations, HTTP headers, HTML meta tags, JSON-LD structured data, and dedicated license files at standardized paths. A two-line integration is enough to add rights-checking to any training pipeline.
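The five detection methods can be pictured with a small standard-library sketch. It is not the detector's actual API: the header name, meta tag name, robots.txt directive, and JSON-LD key below are hypothetical placeholders, and the function works on content that has already been fetched, so that it runs without network access.

```python
import json
from html.parser import HTMLParser

# All marker names are hypothetical placeholders, not the detector's real ones.
HEADER_NAME = "x-ai-privacy-license"
META_NAME = "ai-privacy-license"
ROBOTS_DIRECTIVE = "ai-privacy-license:"
JSONLD_KEY = "aiPrivacyLicense"


class _PageScanner(HTMLParser):
    """Collects the meta-tag signal and the bodies of JSON-LD script blocks."""

    def __init__(self):
        super().__init__()
        self.meta_found = False
        self.jsonld_blocks = []
        self._in_jsonld = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "meta" and (attrs.get("name") or "").lower() == META_NAME:
            self.meta_found = True
        if tag == "script" and attrs.get("type") == "application/ld+json":
            self._in_jsonld = True
            self.jsonld_blocks.append("")

    def handle_data(self, data):
        if self._in_jsonld:
            self.jsonld_blocks[-1] += data

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_jsonld = False


def _jsonld_has_key(blocks):
    for raw in blocks:
        try:
            doc = json.loads(raw)
        except json.JSONDecodeError:
            continue  # ignore malformed JSON-LD rather than failing the whole check
        if isinstance(doc, dict) and JSONLD_KEY in doc:
            return True
    return False


def detect(robots_txt, headers, html, license_file):
    """Map each of the five methods to True/False for already-fetched content."""
    scanner = _PageScanner()
    scanner.feed(html)
    scanner.close()
    return {
        "robots_txt": any(
            line.strip().lower().startswith(ROBOTS_DIRECTIVE)
            for line in robots_txt.splitlines()
        ),
        "http_header": any(name.lower() == HEADER_NAME for name in headers),
        "meta_tag": scanner.meta_found,
        "json_ld": _jsonld_has_key(scanner.jsonld_blocks),
        "license_file": license_file is not None,
    }


page = (
    '<html><head><meta name="ai-privacy-license" content="no-training">'
    '<script type="application/ld+json">{"aiPrivacyLicense": "no-training"}</script>'
    "</head></html>"
)
signals = detect(
    robots_txt="User-agent: *\nDisallow: /private\n",
    headers={"Content-Type": "text/html"},
    html=page,
    license_file=None,
)
print(signals)
```

Checking all five sources and reporting each result separately, rather than stopping at the first hit, lets a pipeline notice when two sources disagree.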

The detector processes over 1,000 URLs per minute with 50 concurrent workers and is designed to integrate directly into the crawling and ingestion pipelines used by OpenAI, Meta, Anthropic, Google, and others. It ships with SSRF protection, configurable resource limits, and built-in EU AI Act compliance reporting.
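The throughput figure implies a per-URL time budget, which a short sketch can make explicit. The worker count and URL rate come from the article; `check_url` is a hypothetical stand-in for a real license check and does no network I/O, and the thread pool is one plausible way to get this kind of concurrency, not necessarily how the detector does it.

```python
from concurrent.futures import ThreadPoolExecutor

WORKERS = 50            # from the article
URLS_PER_MINUTE = 1000  # from the article

# 50 workers sharing 1,000 URLs per minute means 20 URLs per worker per minute,
# so each check can take about 3 seconds on average and still hit that rate.
seconds_per_url = 60 * WORKERS / URLS_PER_MINUTE


def check_url(url: str) -> tuple:
    """Hypothetical stand-in for a per-URL license check (no network I/O)."""
    return url, url.startswith("https://")


def check_all(urls):
    # pool.map preserves input order, so results line up with `urls`.
    with ThreadPoolExecutor(max_workers=WORKERS) as pool:
        return list(pool.map(check_url, urls))


urls = [f"https://example.com/page/{i}" for i in range(200)]
results = check_all(urls)
print(seconds_per_url, len(results))
```

Because license checks are dominated by waiting on remote servers, a pool of threads (or an async equivalent) is usually enough; the budget above shows that the quoted rate does not require unusually fast individual requests.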

Open-sourcing the detector is a deliberate strategy. By making rights-checking free and frictionless for AI companies, De lowers the barrier to adoption on both sides of the ecosystem: creators have a reason to generate licenses, and developers have a ready-made tool to respect them. The marketplace, where AI companies can instantly license restricted content from creators who opt in, sits at the center, turning compliance from a cost into a transaction.

De describes it as the DNS of AI compliance: invisible infrastructure that every participant in the ecosystem will eventually rely on, whether they know it by name or not. The open source release is available at aiprivacylicense.com, with enterprise integrations available through privacylicense.ai.

The distribution moat

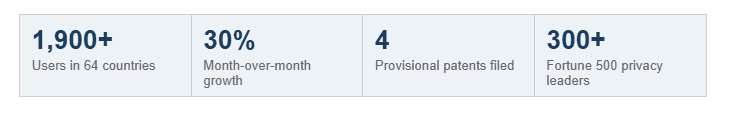

Through her Privacy Champions initiative, De built a community of over 300 Fortune 500 privacy leaders, including Chief Privacy Officers, CISOs, and heads of AI governance. These are the decision-makers who sit at the precise intersection of AI adoption and regulatory exposure, the people whose job it is to answer the question: “Can we use this data?”

The product reached 1,900 users across 64 countries with no paid marketing. That pattern, global organic reach from a standing start, is one De has demonstrated before. Her fake-news detection tool FiB, built during her graduate studies at UMass Amherst, reached 135 countries and won the Google Moonshot Prize at Princeton. Her earlier Bluetooth Messenger was downloaded 50,000 times. The reach is not luck; it appears to be a repeatable property of how she builds.

The founder’s edge

De spent 12 years as a privacy engineer at Microsoft, Uber, and Remitly, building the systems that handle exabytes of data for billions of users. She serves on the IAPP Privacy Engineering Advisory Board. She holds an O-1A visa for Extraordinary Ability in Privacy and Security.

This is not a founder who learned about privacy from the outside. She built the infrastructure that major companies rely on to stay compliant, and she saw firsthand where the gaps were. AI Privacy License is the product of someone who knows exactly what is missing because she spent more than a decade working around its absence.

ABOUT PRIVACY LICENSE

PrivacyLicense.ai is developing the world’s first Privacy Operating System for the AI era. Its flagship product, AI Privacy License, is a machine-readable, legally enforceable post-crawl data governance protocol, standardizing how AI systems interact with protected content globally. The open source AI Privacy License Detector is available at https://github.com/nabanitade/AIPrivacyLicenseDetect. The platform is available at aiprivacylicense.com.

Media Contact: Nabanita De, Founder & CEO · privacylicense.ai